Publication date: March 31, 2026

Japan Data Center Update 12: Data Center Expansion Drives Infrastructure Upgrades and New Deployment Models

TEPCO PG Expands Inzai Substation as Data Center Demand Surges in Chiba

Inzai City hosts dozens of data centers, including facilities operated by Google, and electricity demand from data centers is growing rapidly. Applications for power supply indicate that demand could reach six times the FY2017 level by FY2027. A plan by Daiwa House Industry to construct 14 new buildings near the city's new substation exemplifies this trend.

To respond, Tokyo Electric Power Company Power Grid (TEPCO PG) developed the Chiba Inzai Substation in Inzai City. The substation began operations in June 2024, with two transformers currently in service. Installation of the third and fourth transformers is underway, with operations scheduled to begin in May 2026 and in 2027, respectively. TEPCO PG is also considering the development of an additional substation in Inzai.

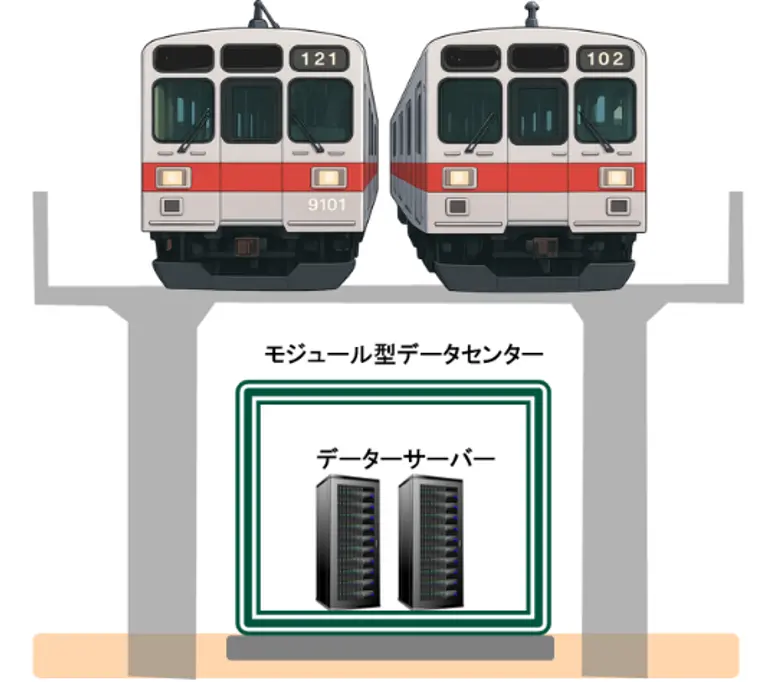

Tokyu Tests Modular Urban Data Centers Under Railway Viaducts in Tokyo

Tokyu Corporation, Tokyu Railways, its Communications, and Tokyu Construction will begin a demonstration project in June 2026 to assess the deployment of urban data centers beneath railway viaducts along the Tokyu line. The project aims to distribute compact facilities across urban areas where data demand is concentrated. It will make direct use of the high-capacity fiber-optic network already installed along the Tokyu line.

In the demonstration, a “modular small-scale data center” will be installed beneath the elevated tracks of the Oimachi Line. The project will measure the impact of the unique under-viaduct environment on servers, as well as the performance of server enclosures in terms of sound insulation, thermal insulation, vibration isolation, and cooling.

Tohoku Electric and Cisco Partner on Distributed AI Data Center Network Design

Tohoku Electric Power is positioning itself to capture AI data center revenue, but the architecture it’s pursuing comes with a genuine technical challenge.

The utility signed an MOU with Cisco Systems to develop network designs for distributed AI data centers: multiple smaller facilities connected across sites rather than a single hyperscale campus. The approach lets Tohoku leverage existing grid infrastructure and land availability across its service territory, but creates a problem that centralized DCs don’t face.

GPU clusters running AI workloads are latency-sensitive. When compute is split across geographically separated sites, the network connecting them becomes a bottleneck: data shuffling between nodes can stall training jobs or degrade inference performance. The partnership aims to develop standardized designs that maintain performance while keeping costs and complexity manageable.
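
To give a sense of scale for the latency concern, the sketch below estimates the propagation delay that fiber distance alone adds between two sites. It is a rough illustration, not a description of the Tohoku Electric and Cisco design: the refractive index is an assumed typical value for single-mode fiber, the distances are hypothetical, and switching, queuing, and protocol overhead are ignored.

```python
# Rough estimate of fiber propagation delay between two data center sites.
# Assumptions (not from the article): a typical single-mode fiber refractive
# index of about 1.468, and hypothetical inter-site fiber distances.

SPEED_OF_LIGHT_KM_PER_S = 299_792.458  # speed of light in vacuum
FIBER_REFRACTIVE_INDEX = 1.468         # assumed typical value


def one_way_delay_ms(fiber_km: float) -> float:
    """Propagation delay in milliseconds over fiber_km of fiber."""
    speed_in_fiber = SPEED_OF_LIGHT_KM_PER_S / FIBER_REFRACTIVE_INDEX
    return fiber_km / speed_in_fiber * 1000.0


def round_trip_delay_ms(fiber_km: float) -> float:
    """Round-trip propagation delay in milliseconds."""
    return 2.0 * one_way_delay_ms(fiber_km)


if __name__ == "__main__":
    # Hypothetical distances: same campus, neighboring city, across a region.
    for km in (1, 50, 300):
        print(f"{km:>4} km: round trip ~{round_trip_delay_ms(km):.3f} ms")
```

Under these assumptions, each 100 km of fiber adds roughly half a millisecond in one direction. That is large compared with the microsecond-scale latencies typical inside a single GPU cluster, which is why the inter-site network design matters for distributed AI workloads.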